AllClinics Agent

AI agent for customer support

Seedium developed an AI agent for a healthcare startup that automated customer support, resolving 85% of issues without human involvement and reducing annual costs by 30–35%.

Project:

AI Agent

Industry:

Healthcare, SaaS

Client:

AllClinics

Services:

AI development

Cooperation Model:

Full-cycle development

Links:

Timeframe:

Sep 2025 - Nov 2025

Product overview

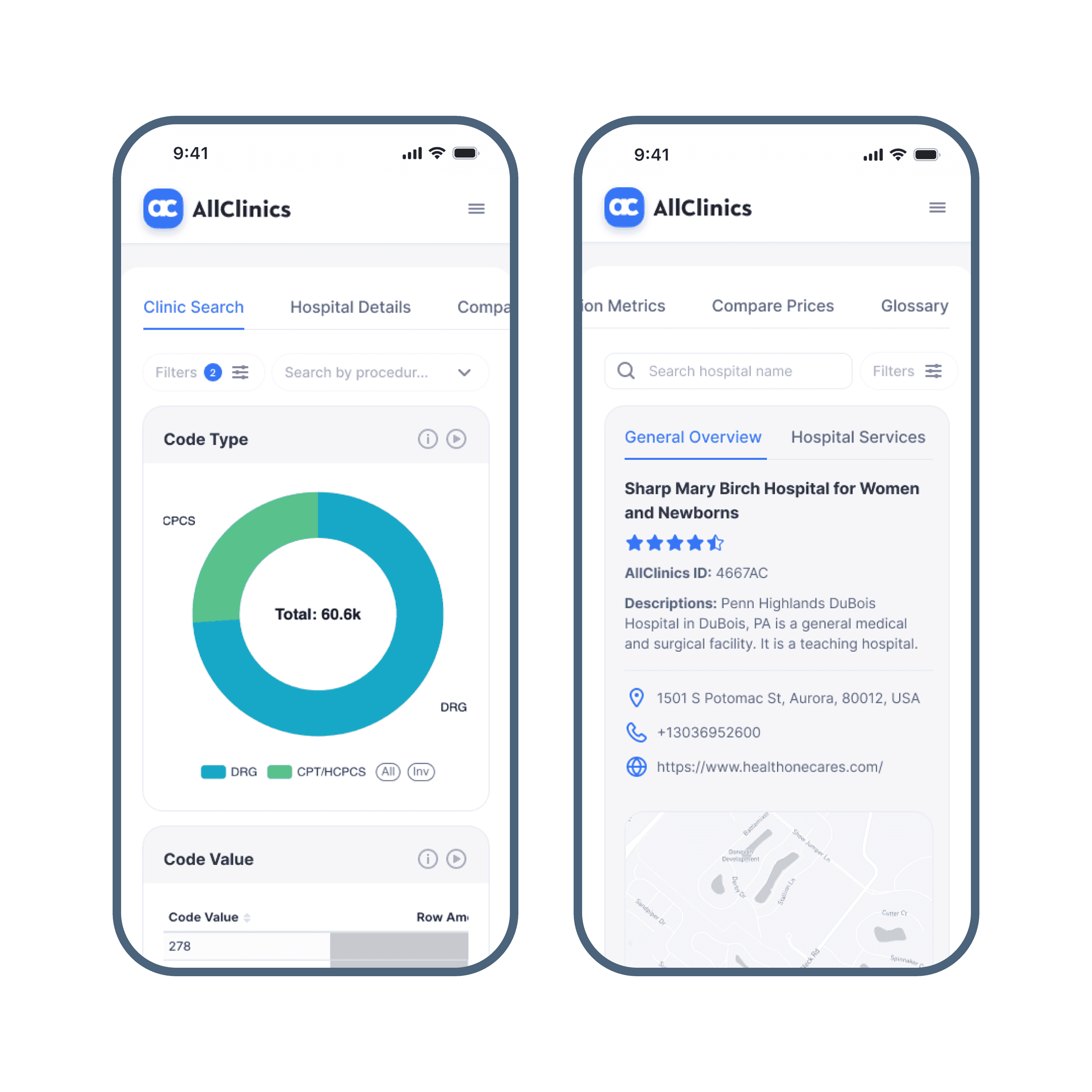

AllClinics is a healthcare market intelligence platform that aggregates data on all licensed medical organizations in the U.S. It provides users with detailed insights into hospital services, prices, insurance plans, affiliated physicians, medications, and equipment to help researchers and businesses make informed decisions.

The story behind

The Seedium team was responsible for the end-to-end development of the AllClinics platform, including a customer chatbot. As the next step, the client tasked us to migrate the chatbot to a full-fledged AI agent to enable autonomous handling of user inquiries and provide more personalized, context-aware assistance across the platform.

Work in numbers

Seedium solutions

Our team successfully migrated the chatbot from the Botpress platform to a custom RAG (Retrieval-Augmented Generation) architecture using modern LLMs.

Since the initial chatbot was built on Botpress, we reused all available assets to streamline development. Using custom scripts and the Botpress API, we exported all content in Bot.csv into CSV/JSON formats, then processed it with LangChain to transform each record into structured documents for indexing.

The new solution is built on an RAG architecture with a modular pipeline for handling user requests:

- Data processing (LangChain): manages ingestion and orchestration of AI workflows, separating indexing from retrieval and generation.

- Data ingestion: Bot.csv and other sources are loaded via LangChain loaders (CSV, text, PDF, etc.).

- Vector storage: FAISS was used for MVP, with Milvus selected for production due to its scalability and rich indexing options.

- LLM layer: responses are generated using OpenAI GPT-4o, with Anthropic Claude as an alternative model.

- Safety & policy layer: a dedicated post-generation moderation step ensures compliance with defined privacy and safety rules.

This approach allowed us to fully preserve existing Botpress assets while transitioning them into a scalable, production-ready RAG-based AI agent system.

Since the system retrieves answers directly from indexed documents stored as vectors, responses are grounded in real data, significantly reducing hallucinations and improving factual accuracy.

We integrated the rules from Privacy-Policy.txt into a dedicated Policy layer, including blacklists, topic restrictions, and PII masking. In addition, incoming requests are filtered for language and prompt injection attempts.

The Policy Agent acts as a safeguard, blocking non-compliant outputs and ensuring the system only generates responses within approved boundaries.

We configured the architecture with a focus on the cloud, using AWS, but the system is designed for flexible deployment in both cloud environments and on-premises setups.

To improve performance, we introduced a fragment-level caching layer using Redis, which reduces response time for repeated queries. We also implemented asynchronous processing and request prioritization, achieving an average latency of ~0.7 seconds.

For continuous delivery, GitHub Actions automate testing and deployment whenever rules or model configurations are updated.

The system was further validated through user testing, edge-case scenarios, adversarial prompts, and stress testing to ensure stability, reliability, and safe behavior under real-world conditions.

Project highlights

Features Implemented

RAG-based AI agent architecture

Data ingestion, retrieval, and generation pipeline

Vector search for semantic document retrieval

LLM integration

Redis-based caching

Async processing and request prioritization

Hallucination prevention

Compliance & security layer

Tech Stack

OpenAI

Claude AI

Botpress

LangChain

Hugging Face

FAISS

Milvus

The outcomes and recognition

The AI chatbot was developed and released within 5 weeks, thanks to an effective combination of existing Botpress resources and advanced LLM models. Optimization of the retrieval pipeline allowed us to achieve an accuracy rate exceeding 90%.

As a result, the client automated customer support with 24/7 availability, which led to approximately 85% of issues being resolved without human intervention. This transition is projected to reduce total support operational expenditure by 30–35% annually.

“Our approach demonstrates that it’s possible to build a reliable and secure AI agent even with modest budgets, using existing resources and modern LLM models. In the tech vision, we also considered scalability from the outset to ensure the system can grow smoothly with increasing data, users, and operational complexity without requiring major redesigns.”

More work

Mariana Dzhus

Business Development Manager

Tell us about your project needs

We'll get back to you within 24 hours